With the increasing computational capabilities of microcontroller

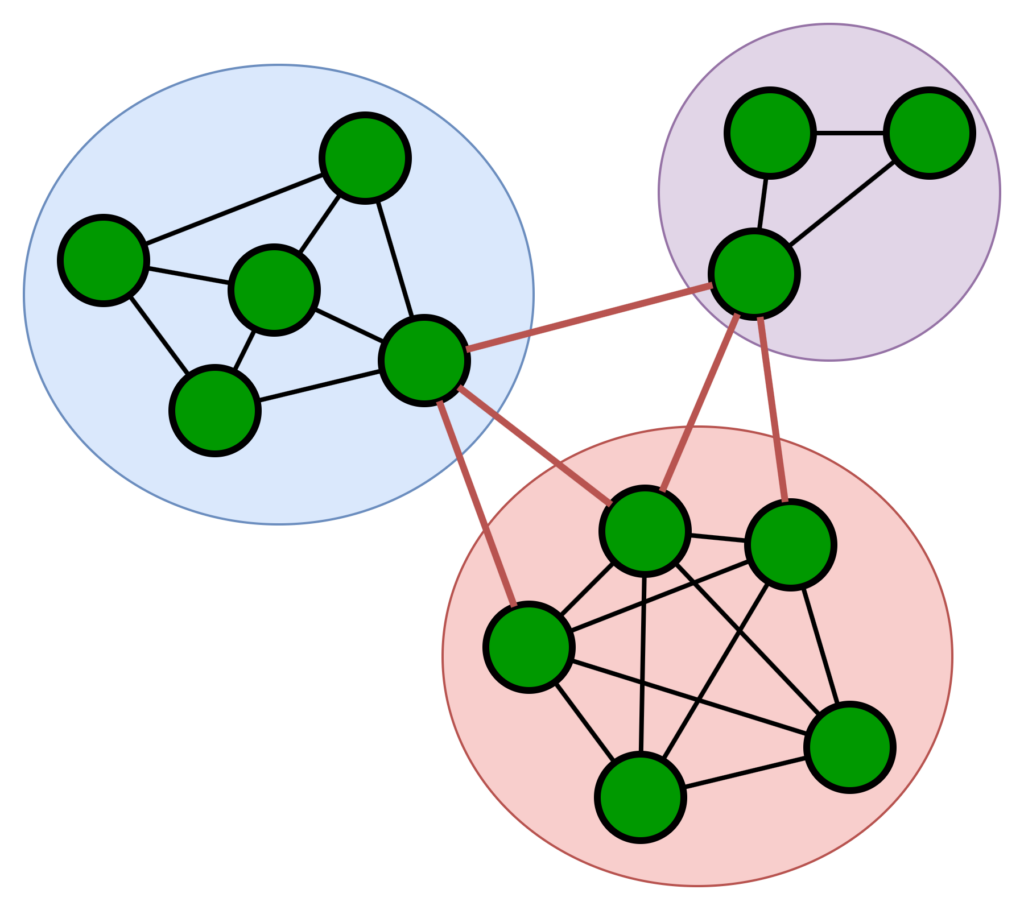

units (MCUs), edge devices can now support on-device machine learning. However, deploying decentralised federated learning on such devices presents significant challenges, including intermittent connectivity, limited communication range, and strict memory constraints. This paper presents a bilayer gossip-based

decentralised learning framework, termed Gossip Decentralised Parallel Stochastic Gradient Descent (GD-PSGD), designed for dynamic mobile edge environments without a central coordinator. The framework combines Distributed K-means clustering for geographic grouping with a memory-efficient cumulative aggregation scheme, enabling effective intra-cluster and inter-cluster model exchange with minimal storage overhead. We evaluate the proposed approach against a Centralised Federated Learning (CFL) baseline using the MCUNet model on

the CIFAR-10 dataset under IID and Non-IID data distributions. Results show that the proposed method achieves comparable accuracy to CFL under IID conditions, requiring only 1.8 additional rounds to converge. Under moderate Non-IID settings, the accuracy degradation remains below 8%. These results demonstrate the feasibility of scalable and privacy-preserving decentralised learning on MCU-class edge devices with limited performance and memory overhead.
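The abstract does not spell out how the cumulative aggregation scheme works. The following is a minimal sketch under one plausible assumption: each device keeps a single running weighted average of model parameters and folds in each neighbour model as it arrives, so memory stays at one model-sized buffer regardless of how many neighbours are heard from. The class `CumulativeAggregator` and its methods are hypothetical names for illustration, not the authors' implementation.

```python
from typing import List, Sequence


class CumulativeAggregator:
    """Hypothetical running weighted average of flattened model parameters.

    Assumption: aggregation is a weighted mean that is updated incrementally,
    so received neighbour models never need to be stored simultaneously.
    """

    def __init__(self, local_params: Sequence[float], local_weight: float = 1.0) -> None:
        # Start from the device's own model as the initial average.
        self.avg: List[float] = list(local_params)
        self.total_weight: float = local_weight

    def absorb(self, neighbour_params: Sequence[float], weight: float = 1.0) -> None:
        """Fold one received neighbour model into the running average in place."""
        if len(neighbour_params) != len(self.avg):
            raise ValueError("parameter vectors must have equal length")
        if weight <= 0:
            raise ValueError("weight must be positive")
        new_total = self.total_weight + weight
        step = weight / new_total
        # Incremental weighted mean: avg <- avg + (w / W_new) * (x - avg)
        for i, x in enumerate(neighbour_params):
            self.avg[i] += step * (x - self.avg[i])
        self.total_weight = new_total

    def result(self) -> List[float]:
        """Return a copy of the current aggregated parameters."""
        return list(self.avg)


if __name__ == "__main__":
    agg = CumulativeAggregator([0.0, 0.0])
    agg.absorb([2.0, 4.0])  # first neighbour model received
    agg.absorb([4.0, 8.0])  # second neighbour model received
    print(agg.result())
```

In this sketch, absorbing two neighbour vectors with equal weights yields the plain mean of all three models, computed without ever holding more than the accumulator and the incoming model in memory, which is the kind of saving an MCU-class device with tight RAM would need.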

IEEE Pervasive Computing Journal, Accepted.