The goal of the EDGE AI FOUNDATION is to build awareness and share best practices for implementing Edge AI solutions. The first deliverable from the community’s recently formed Commercialization Working Group is a white paper that lays out a comprehensive taxonomy for the Edge AI continuum, key considerations for real-world implementation, typical use cases for different edge locations, and a solution matrix with tips for selecting commercial partners.

The dream scenario? A unified toolkit that makes it easy to securely build, deploy and manage AI models anywhere along the edge continuum, with the same agility we have today in the cloud. This means tools that are flexible, interoperable and secure, yet tailored to the specific needs of the target hardware. With them, developers can create AI models that run efficiently wherever they are needed along the edge continuum, striking the right balance of performance, cost, security, and privacy for each location. To make this happen, we need an “orchestrator of orchestrators” model that ties all the inherently different underlying tools together.

As for AI workloads, the idea is to standardize tools so models can be built once and deployed across various edge locations, while still accounting for the technical challenges each location presents. While today’s edge AI models typically perform inference, we’re likely to see more generative AI and decentralized training applications popping up.

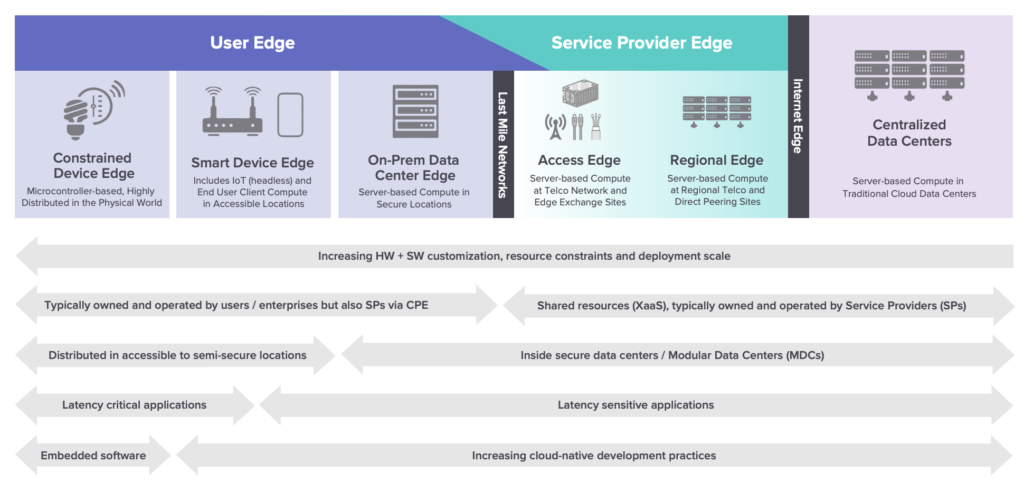

The paper will introduce the EDGE AI Taxonomy, which the community has adapted from the Linux Foundation’s LF Edge organization. The taxonomy categorizes different edge computing paradigms based on inherent technical and logistical tradeoffs, spanning everything from regional edge data centers to resource-constrained devices in the field. It emphasizes the need for a solid strategy when building out Edge AI solutions, one that considers needs in both the application and infrastructure planes. It will also outline how security concerns, operational needs, and deployment complexity differ between IT and operational technology (OT) organizations.

LF Edge Taxonomy (Source: 2020 “Sharpening the Edge” White Paper)

The paper will have a strong focus on cybersecurity, including the importance of a zero-trust model, because edge devices often lack the well-defined security perimeters of traditional data centers. It will also offer a thorough analysis of considerations for selecting commercial solution partners for scalable Edge AI implementation, covering specializations in AI, hardware, security, management and orchestration, and services. It will close by stressing the importance of industry collaboration to streamline AI deployment across the edge.

The edge is a complex landscape, but with the right tools and strategies, we can make it seamless to deploy AI across many types of environments and devices. Stay tuned for the full paper, which will be released ahead of the EDGE AI FOUNDATION’s San Diego event, March 24-26!